
Exploration of a Platform for the Utilization of Artificial Intelligence in Semiconductor Manufacturing

Series 1. Platform for Machine Learning

IVWorks combines epitaxy technology with artificial intelligence (AI) to provide differentiated, high-quality Epi wafer foundry services. This series of three articles covers AI in semiconductor manufacturing from three angles: data, model, and platform. Let us look at semiconductor manufacturing from the perspective of a researcher directly involved in DOMM AI Epitaxy System research.

Have you ever wondered how the messaging application KakaoTalk works? Several computers at the data center (a building or facility that maintains server computers and network lines) at the headquarters of Kakao Corporation are working constantly to connect the KakaoTalk app installed on your smartphone with the KakaoTalk app on your friend's phone. The application software installed on these computers receives and processes messages, and then delivers them to the recipient. The combination of software and computers that provides the foundation for running the application software is commonly referred to as a platform. In everyday English, a platform is a raised surface from which passengers board or leave trains. Application software can be thought of as the trains, such as Korea Train Express, Saemaul, or Mugunghwa, and the platform helps them run smoothly.

Developing artificial intelligence involves repeated data collection, preprocessing, analysis, and training to construct and adjust a model. Automating this series of tasks is therefore essential for efficiency, and the platform that provides the foundation for the automated application software plays a crucial role. In this article, we examine Kubernetes, a platform frequently used for machine learning.

Kubernetes, a powerful platform supported by enthusiasts around the world

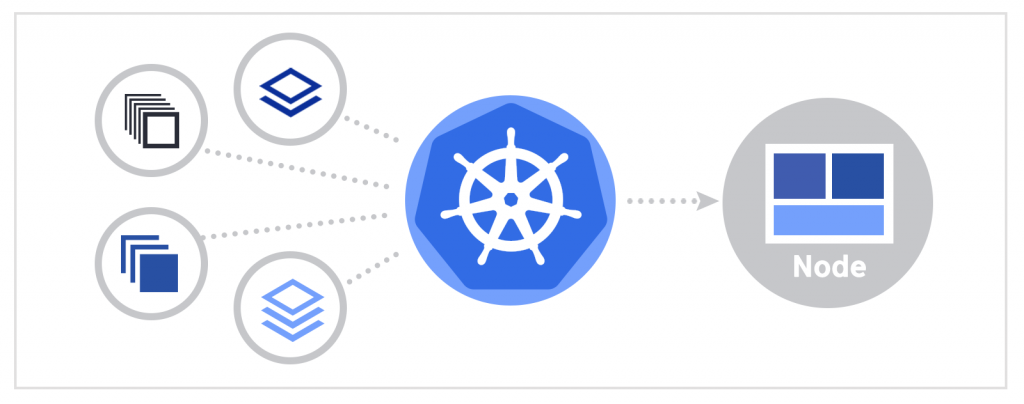

Data centers in companies such as Naver Corporation and Kakao Corporation have tens of thousands of computers in operation. Google, one of the few companies operating hundreds of thousands of computers worldwide, recognized the need for a better way of deploying and managing application software and computers. Kubernetes was released in 2014, following Borg and Omega, which are systems developed internally at Google. Kubernetes is an open-source software system that makes it easy to deploy and manage application software. The word kubernetes means pilot or helmsman in Greek.

Why do we require Kubernetes?

There are several reasons for using Kubernetes. First, in the past, application software was developed as a single unit; in recent years, however, systems have increasingly been built by splitting a single application software into several smaller units. The former is called monolithic application software and the latter is called a microservice system. With monolithic application software, the entire application software must be rebuilt and redeployed to the computer even for a minor modification. Over time, system complexity also increases as the boundaries between components blur and interdependencies accumulate. In addition, the system can only be scaled as a whole when the number of users increases. To address these issues, the microservice system, composed of small components that are deployed to computers independently, was developed. As the system consists of partitioned components, a partial modification can be deployed to only the corresponding microservice. In addition, if the load on a particular component increases, only that component needs to be scaled. However, as the number of components grows, it becomes increasingly difficult to determine where to deploy each component in a microservice system.
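The scaling difference described above can be made concrete with a small sketch. The component names, load figures, and per-instance capacity below are invented for illustration and do not come from any real service; the point is only that a monolith must be copied as a whole, while microservices scale one component at a time.

```python
import math

# Hypothetical load (requests per second) for each component, and the
# load a single instance of that component can handle. Made-up numbers.
load = {"login": 50, "chat": 900, "search": 120}
capacity_per_instance = {"login": 100, "chat": 100, "search": 100}


def microservice_instances(load, capacity):
    """Scale each component independently to meet its own load."""
    return {name: math.ceil(load[name] / capacity[name]) for name in load}


def monolith_copies(load, capacity):
    """A monolith bundles every component, so the busiest one sets the copy count."""
    return max(math.ceil(load[name] / capacity[name]) for name in load)


per_service = microservice_instances(load, capacity_per_instance)
copies = monolith_copies(load, capacity_per_instance)

print(per_service)                 # {'login': 1, 'chat': 9, 'search': 2}
print(sum(per_service.values()))   # 12 component instances in total
print(copies * len(load))          # 27 component instances inside 9 monolith copies
```

In this toy case the busy chat component forces nine full copies of the monolith, whereas the microservice layout adds instances only where the load is.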

Second, application software requires a consistent environment. Programmers first develop the application software on their own computers and then, through a series of processes, deploy it to the computers that actually provide the service. Problems may occur if the environment of the computer providing the actual service differs from that of the developer. Additionally, the environment of the computer that provides the actual service may change as it operates.

However, such complications can be avoided if the operating system, libraries, system configuration, networking environment, and other conditions are identical on every computer on which the application software runs. Problems can be reduced further if the computer environment does not change significantly during operation.

Third, the way of working has changed with continuous deployment. In the past, the development team created the application software, and the operation team deployed it to the computers and managed it. Recently, however, it has come to be considered more efficient for the development team to take responsibility for deploying and managing the application software as well. The difficulty is that the development team sometimes lacks an understanding of the computers and infrastructure on which the actual services are provided. Therefore, people have looked for a way for developers, who do not necessarily know the computers and infrastructure well, to deploy the application software directly, without involving the operation team.

A system such as Kubernetes can deal with all these changes and problems. Kubernetes determines and manages where microservices are deployed. It also provides a consistent environment for application software. Finally, it virtualizes physical resources and offers them as a platform, which helps development teams continuously deploy and manage application software.
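To give a feel for what "determining where to deploy" involves, here is a minimal placement sketch. It is not the Kubernetes scheduler, which filters and scores nodes using many more criteria; it is a simplified greedy rule under assumed inputs, and the `Node` class, the `place` function, and all resource figures are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    name: str
    cpu_free: int   # free CPU cores (hypothetical figure)
    mem_free: int   # free memory in GiB (hypothetical figure)
    containers: list = field(default_factory=list)


def place(containers, nodes):
    """Assign each container to the node that fits it and has the most free CPU.

    `containers` is a list of (name, cpu, mem) tuples. Returns a dict that
    maps each container name to a node name, or to None if no node fits.
    """
    placement = {}
    for name, cpu, mem in containers:
        # Filter step: keep only nodes with enough free resources.
        candidates = [n for n in nodes if n.cpu_free >= cpu and n.mem_free >= mem]
        if not candidates:
            placement[name] = None
            continue
        # Score step: prefer the node with the most free CPU.
        best = max(candidates, key=lambda n: n.cpu_free)
        best.cpu_free -= cpu
        best.mem_free -= mem
        best.containers.append(name)
        placement[name] = best.name
    return placement


nodes = [Node("node-a", cpu_free=4, mem_free=8), Node("node-b", cpu_free=3, mem_free=4)]
containers = [("chat", 2, 2), ("search", 2, 4), ("login", 1, 1), ("batch", 8, 16)]
result = place(containers, nodes)
print(result)
# {'chat': 'node-a', 'search': 'node-b', 'login': 'node-a', 'batch': None}
```

The developer only states what each container needs; the platform decides which computer runs it, and reports when nothing fits (as with the oversized `batch` container here).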

Efficient method for deploying application software: containers

What comes to mind when you think of containers? You might think of shipping containers on cargo ships. As microservices became popular, information technology developers began to deliver their application software in containers, much as export companies transport products in shipping containers. For microservices, developers first place the application software in a container, and one or more containers are then bundled and deployed to computers for actual service.

The application software is packaged in a container together with its required libraries and other dependencies so that it can run in isolation. This isolation makes it easy to continuously deploy container-based application software in any environment, from Linux to Windows, virtual machines, data centers, cloud services, and the MacBook laptops of developers. Containerization also enables a clear division of work. Each developer can focus on the logic and dependencies of the application software they are responsible for, without considering other application software. The operation team is only required to supervise the overall deployment and management and can ignore the finer details. Kubernetes, described earlier, is a software system that makes it easy to deploy and manage containerized application software.

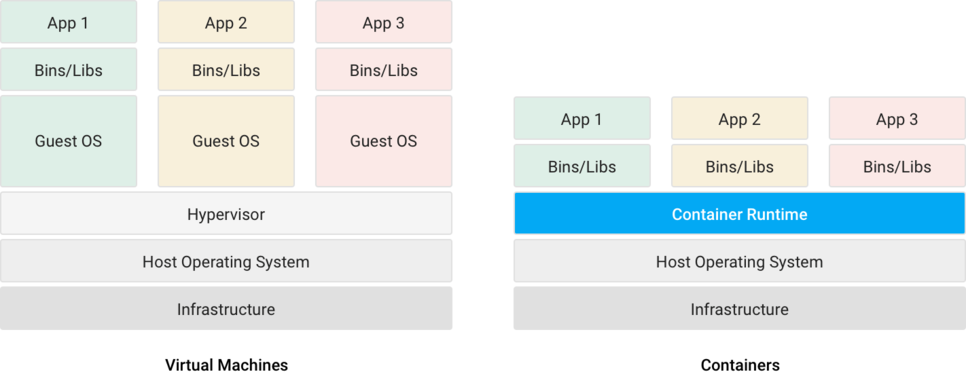

Containers allow multiple applications to be executed on the same computer and enable the creation of a separate environment that is optimized for each application software. Thus, they provide an environment that is logically isolated from other application software. A virtual machine performs the same task, but containers are lighter in weight and more portable than virtual machines. If you have ever used an emulator (a software program that virtually implements a game machine so that its games can be played on a computer) to play a game from a discontinued game machine, you know how slow a virtual machine can be.

Like Kubernetes, containers provide a consistent environment. With a consistent environment, developers can reduce bugs caused by environmental differences and spend more time developing new features.

From Google to Kakao and IVWorks

According to Google, everything from Gmail to YouTube and Google Search is executed in containers, and Google creates billions of containers every week. Google has also shared what it has learned: one of many examples is the release of the designs behind its internal tools through the Kubernetes project. Google initiated the Kubernetes project, in which all application software is run in containers.

Kakao Corporation, famous for KakaoTalk, has several services that are executed in containers. The machine learning platform developed by LG Electronics is also managed by container-based Kubernetes. Thus, numerous companies have adopted container architecture and Kubernetes in their platforms for application software.

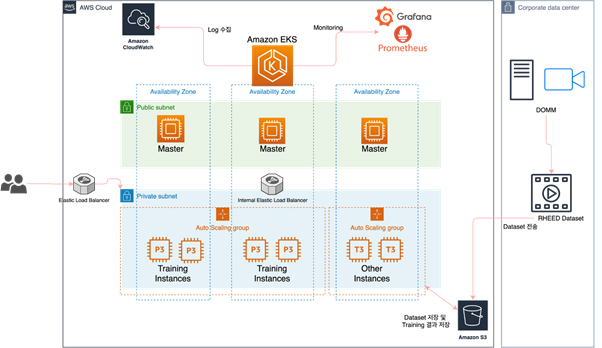

IVWorks is also actively using containers and Kubernetes to create a platform in the semiconductor field (DOMM™). IVWorks uses containers to operate its application software and, as shown above, manages those containers by deploying the Kubernetes platform on Amazon Web Services (AWS), the cloud service of Amazon. Elastic Kubernetes Service (EKS), the fully managed Kubernetes service of AWS, enables high availability through multiple availability zones while reducing the administrative burden. In addition, the auto-scaling of AWS secures scalability even when the workload increases rapidly. Finally, Amazon CloudWatch is used to collect EKS logs, and EKS monitoring uses Grafana and Prometheus, which have become the de facto standards.
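The article does not say which auto-scaling mechanism IVWorks uses, so the following is only an illustration of how one common rule works. The Kubernetes Horizontal Pod Autoscaler documentation describes a proportional rule, desired replicas = ceil(current replicas × current metric / target metric). The sketch below applies that rule with an assumed minimum and maximum; the function name, the limits, and the metric values are hypothetical, and real autoscalers add tolerances and stabilization windows that are omitted here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Proportional scaling rule, clamped to [min_replicas, max_replicas].

    The metric could be, for example, average CPU utilization in percent.
    """
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Load rises above the target: scale out from 3 replicas.
print(desired_replicas(3, current_metric=90, target_metric=60))   # 5
# Load falls well below the target: scale in.
print(desired_replicas(3, current_metric=20, target_metric=60))   # 1
# A sudden spike is capped by the assumed maximum of 10 replicas.
print(desired_replicas(4, current_metric=300, target_metric=60))  # 10
```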

Several tools have been designed to make machine learning tasks easier on Kubernetes. In the next article, we will review Kubeflow, which enables easy and intuitive deployment of machine learning workflows in the Kubernetes environment.

Jun-Suk Chang | Artificial Intelligence team
